Sooftware NLP - Efficient Attention Paper Review

Efficient Attention: Attention with Linear Complexities

- Shen Zhuoran et al.

Abstract

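- The memory and computation cost of dot-product attention grows quadratically with the input length.

- The paper proposes a small modification to the attention mechanism that substantially reduces memory and computation cost, bringing it down to linear in the input length.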
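To make the quadratic growth concrete, here is a rough sketch that counts the elements in the largest intermediate matrix of each variant. The function name `attention_map_sizes` and the choice `d_k = d_v = 64` are illustrative assumptions, not values from the paper.

```python
def attention_map_sizes(n, d_k, d_v):
    """Return element counts of the largest intermediate in each variant.

    n   : input length (number of positions)
    d_k : key/query dimensionality
    d_v : value dimensionality
    """
    dot_product = n * n    # pairwise similarity matrix Q K^T has shape (n, n)
    efficient = d_k * d_v  # global context matrix K^T V has shape (d_k, d_v)
    return dot_product, efficient


# Hypothetical sizes, chosen only for illustration.
for n in (1_024, 4_096, 16_384):
    dp, eff = attention_map_sizes(n, d_k=64, d_v=64)
    print(f"n={n:>6}: dot-product {dp:>12,} elements, efficient {eff:,} elements")
```

The dot-product intermediate grows 16x each time `n` grows 4x, while the efficient intermediate does not depend on `n` at all. The inputs and outputs themselves still scale linearly with `n` in both cases.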

Method

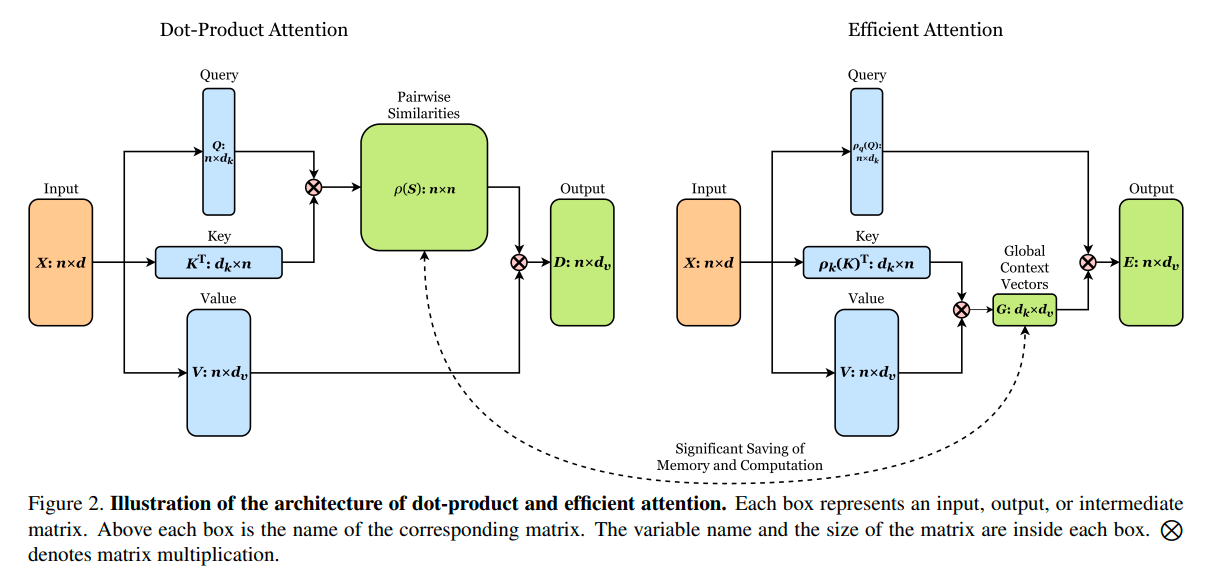

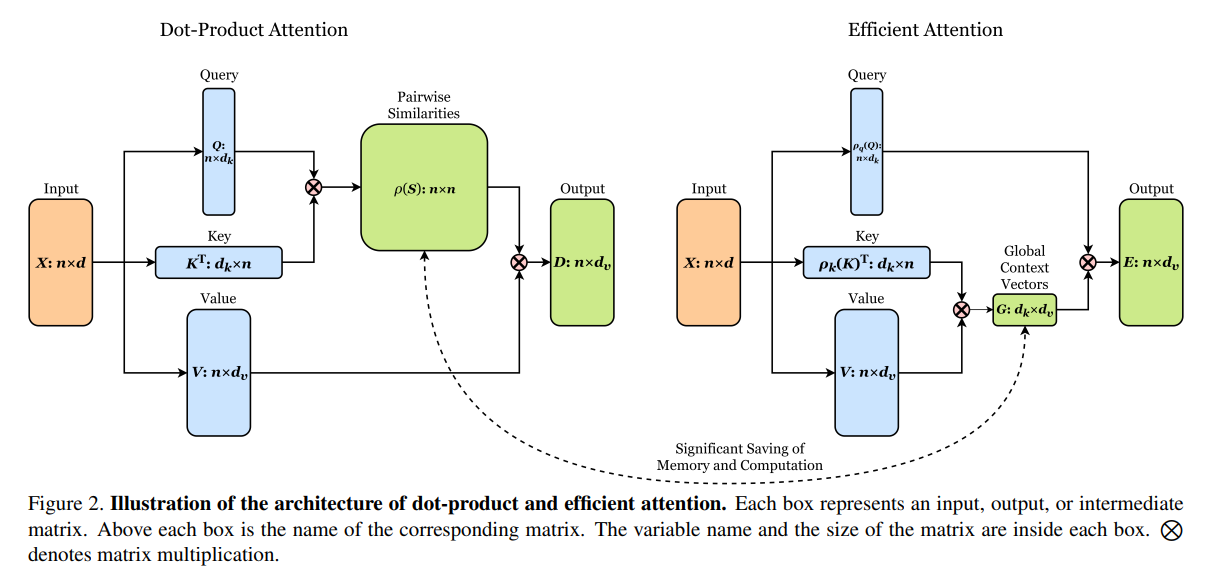

- Instead of computing pairwise similarity with the usual query-key dot product, the method multiplies the keys and values first.

- Dot-product: $D(Q, K, V) = \rho(QK^\top)V$

- Efficient: $E(Q, K, V) = \rho_q(Q)\left(\rho_k(K)^\top V\right)$
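The difference in multiplication order can be sketched in NumPy as follows. This is a minimal single-head illustration, not the authors' implementation: the function names are made up for this post, the softmax axes follow my reading of the paper (queries normalized over channels, keys normalized over positions), and no `sqrt(d_k)` scaling is applied.

```python
import numpy as np


def softmax(x, axis):
    # Numerically stable softmax along the given axis.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def dot_product_attention(Q, K, V):
    # Builds an (n, n) similarity matrix, so cost is quadratic in n.
    weights = softmax(Q @ K.T, axis=1)
    return weights @ V


def efficient_attention(Q, K, V):
    # Assumed normalization: each query over its d_k channels,
    # each key channel over the n positions.
    q = softmax(Q, axis=1)
    k = softmax(K, axis=0)
    context = k.T @ V  # (d_k, d_v): independent of n
    return q @ context


rng = np.random.default_rng(0)
n, d_k, d_v = 128, 16, 32  # illustrative sizes
Q = rng.standard_normal((n, d_k))
K = rng.standard_normal((n, d_k))
V = rng.standard_normal((n, d_v))

print(dot_product_attention(Q, K, V).shape)  # (n, d_v)
print(efficient_attention(Q, K, V).shape)    # (n, d_v)

# With a plain scaling normalizer instead of softmax, the two orderings
# give the same result by associativity of matrix multiplication.
s = np.sqrt(n)
left = ((Q / s) @ (K / s).T) @ V
right = (Q / s) @ ((K / s).T @ V)
print(np.allclose(left, right))
```

With softmax the two variants are no longer exactly equal, only similar in behavior, so the final check uses simple scaling to show that the reordering itself does not change the result.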

Experiment

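- Comparing standard attention with the proposed attention shows that the proposed version is considerably more efficient.

- The results table shows that it also achieves better performance.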
